IEEE 802.1Q Tunneling

By stretch | Monday, July 12, 2010 at 3:46 a.m. UTC

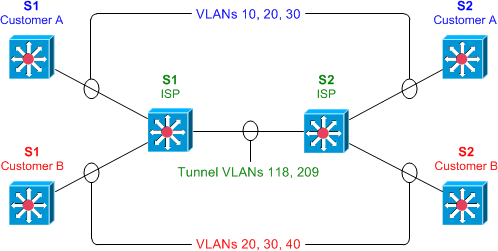

IEEE 802.1Q tunneling can be used to achieve simple layer two VPN connectivity between sites by encapsulating one 802.1Q trunk inside another. The topology below illustrates a common scenario where 802.1Q (or "QinQ") tunneling can be very useful.

A service provider has infrastructure connecting two sites at layer two and wants to provide its customers with transparent layer two connectivity. A less-than-ideal solution would be to assign each customer a range of VLANs it may use. However, this is very limiting: it removes the customers' flexibility to choose their own VLAN numbers, and on large networks there may not be enough VLAN numbers to go around (the 12-bit VLAN ID field allows at most 4,094 usable IDs).
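To put a number on the VLAN shortage, here is a small Python sketch of the arithmetic. The figure of 100 customers is purely illustrative and not taken from the scenario above.

```python
VID_BITS = 12                    # width of the VLAN ID field in an 802.1Q tag
RESERVED_VIDS = {0x000, 0xFFF}   # 0 marks priority-tagged frames, 4095 is reserved


def usable_vlan_ids(bits: int = VID_BITS) -> int:
    """Number of VLAN IDs that can actually be assigned."""
    return 2 ** bits - len(RESERVED_VIDS)


def vlans_per_customer(total_vlans: int, customers: int) -> int:
    """VLANs each customer would get if the ID space were split evenly."""
    return total_vlans // customers


total = usable_vlan_ids()
print(total)                           # 4094
print(vlans_per_customer(total, 100))  # 40 (hypothetical customer count)
```

Splitting the ID space leaves each customer with only a few dozen VLANs, and those numbers are dictated by the provider rather than chosen by the customer.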

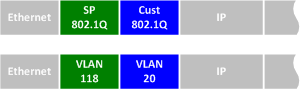

802.1Q tunneling solves both of these issues by assigning each customer a single VLAN number, chosen by the service provider. Within each customer VLAN exists a secondary 802.1Q trunk, which is controlled by the customer. Each customer frame traversing the service provider network is tagged twice: the innermost 802.1Q header contains the customer-chosen VLAN ID, and the outermost header contains the VLAN ID assigned to the customer by the service provider.
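The double tagging can be sketched in a few lines of Python. This is only an illustration of the frame layout, not switch code: the MAC addresses, the inner VLAN 10, and the payload are made up, and both tags are assumed to use the standard 802.1Q TPID of 0x8100 (some platforms use a different value for the outer tag).

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that identifies an 802.1Q tag


def vlan_tag(vid: int, pcp: int = 0, dei: int = 0, tpid: int = TPID_8021Q) -> bytes:
    """Pack one 4-byte tag: 16-bit TPID, then 3-bit PCP, 1-bit DEI, 12-bit VLAN ID."""
    if not 1 <= vid <= 4094:
        raise ValueError("VLAN ID must be in the range 1..4094")
    tci = (pcp << 13) | (dei << 12) | vid
    return struct.pack("!HH", tpid, tci)


def double_tagged_frame(dst: bytes, src: bytes, outer_vid: int, inner_vid: int,
                        ethertype: int, payload: bytes) -> bytes:
    """Lay out a frame as it would appear on the provider backbone."""
    return (dst + src
            + vlan_tag(outer_vid)   # provider-assigned VLAN
            + vlan_tag(inner_vid)   # customer-chosen VLAN
            + struct.pack("!H", ethertype)
            + payload)


# Hypothetical addresses and payload; 118 is customer A's provider VLAN.
dst = bytes.fromhex("ffffffffffff")
src = bytes.fromhex("020000000001")
frame = double_tagged_frame(dst, src, outer_vid=118, inner_vid=10,
                            ethertype=0x0800, payload=bytes(46))
print(frame[12:20].hex())  # 810000768100000a
```

The eight bytes after the MAC addresses are the two stacked tags: 0x0076 is VLAN 118 and 0x000a is VLAN 10.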

802.1Q Tunnel Configuration

Before we get started with the configuration, we must verify that all of our switches support the necessary maximum transmission unit (MTU) of 1504 bytes: the standard 1500 bytes plus four bytes for the additional 802.1Q tag. We can use the command show system mtu to check this, and the global configuration command system mtu to modify the device MTU if necessary (note that a reload will be required for the new MTU to take effect).

    S1# show system mtu
    System MTU size is 1500 bytes
    S1# configure terminal
    S1(config)# system mtu 1504
    Changes to the System MTU will not take effect until the next reload is done.
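The 1504 figure follows from simple arithmetic: each 802.1Q tag adds four bytes to the frame. A minimal Python sketch, assuming the customer expects a standard 1500-byte MTU (the function name is just for illustration):

```python
TAG_BYTES = 4     # size of one 802.1Q tag (2-byte TPID + 2-byte TCI)
BASE_MTU = 1500   # standard Ethernet MTU assumed on the customer side


def required_mtu(extra_tags: int, base_mtu: int = BASE_MTU) -> int:
    """MTU the provider switches need to carry frames with extra tags added."""
    if extra_tags < 0:
        raise ValueError("extra_tags must not be negative")
    return base_mtu + TAG_BYTES * extra_tags


print(required_mtu(1))  # 1504, one provider tag as in this article
print(required_mtu(2))  # 1508, if a further tag were stacked on top
```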

Next, we'll configure our backbone trunk to carry the top-level VLANs for customers A and B, which have been assigned VLANs 118 and 209, respectively. We configure a normal 802.1Q trunk on both ISP switches. The last configuration line below restricts the trunk to carrying only VLANs 118 and 209; this is an optional step.

    S1(config)# interface f0/13
    S1(config-if)# switchport trunk encapsulation dot1q
    S1(config-if)# switchport mode trunk
    S1(config-if)# switchport trunk allowed vlan 118,209

    S2(config)# interface f0/13
    S2(config-if)# switchport trunk encapsulation dot1q
    S2(config-if)# switchport mode trunk
    S2(config-if)# switchport trunk allowed vlan 118,209

Now for the interesting bit: the customer-facing interfaces. We assign each interface to the appropriate upper-level (service provider) VLAN and set its operational mode to dot1q-tunnel. We'll also enable layer two protocol tunneling to transparently carry CDP and other layer two protocols between the CPE devices.

    S1(config)# interface f0/1
    S1(config-if)# switchport access vlan 118
    S1(config-if)# switchport mode dot1q-tunnel
    S1(config-if)# l2protocol-tunnel
    S1(config-if)# interface f0/3
    S1(config-if)# switchport access vlan 209
    S1(config-if)# switchport mode dot1q-tunnel
    S1(config-if)# l2protocol-tunnel

    S2(config)# interface f0/2
    S2(config-if)# switchport access vlan 118
    S2(config-if)# switchport mode dot1q-tunnel
    S2(config-if)# l2protocol-tunnel
    S2(config-if)# interface f0/4
    S2(config-if)# switchport access vlan 209
    S2(config-if)# switchport mode dot1q-tunnel
    S2(config-if)# l2protocol-tunnel
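Conceptually, a tunnel port treats whatever the customer sends as opaque: it adds the provider tag on the way in and removes it on the way out. The Python sketch below models only that behavior. The function names and the sample frame are hypothetical, and the 0x8100 TPID for the outer tag is an assumption.

```python
TAG_LEN = 4
TPID = b"\x81\x00"  # assumed TPID for the provider tag


def push_outer_tag(frame: bytes, access_vlan: int) -> bytes:
    """Ingress on a tunnel port: insert the provider tag right after the
    two MAC addresses, regardless of whether the frame is already tagged."""
    tci = access_vlan & 0x0FFF  # priority bits left at zero for simplicity
    return frame[:12] + TPID + tci.to_bytes(2, "big") + frame[12:]


def pop_outer_tag(frame: bytes) -> bytes:
    """Egress on the far-end tunnel port: strip the provider tag again."""
    if frame[12:14] != TPID:
        raise ValueError("frame carries no outer 802.1Q tag")
    return frame[:12] + frame[12 + TAG_LEN:]


# Hypothetical customer A frame, already tagged with customer VLAN 10.
customer_frame = bytes(12) + b"\x81\x00\x00\x0a" + b"\x08\x00" + bytes(46)

tunneled = push_outer_tag(customer_frame, access_vlan=118)  # enters at S1 Fa0/1
delivered = pop_outer_tag(tunneled)                         # leaves at S2 Fa0/2
print(delivered == customer_frame)  # True
```

Because the customer's own tag is never inspected or rewritten, the customer is free to pick any VLAN numbers it likes.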

We can use the command show dot1q-tunnel on the ISP switches to get a list of all interfaces configured as 802.1Q tunnels:

    S1# show dot1q-tunnel
    dot1q-tunnel mode LAN Port(s)
    -----------------------------
    Fa0/1
    Fa0/3

Now that our tunnel configurations have been completed, each customer VLAN has transparent end-to-end connectivity between sites. This packet capture shows how customer traffic is double-encapsulated inside two 802.1Q headers along the ISP backbone. Any traffic left untagged by the customer (i.e., traffic in the native VLAN 1) is tagged only once, by the service provider.
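To check the tag stack in a captured frame yourself, a short Python sketch like the following walks the 802.1Q headers and lists the VLAN IDs from outermost to innermost. The sample frames are fabricated for illustration, and the parser assumes every tag uses TPID 0x8100.

```python
import struct
from typing import List, Tuple


def vlan_stack(frame: bytes, tpids: Tuple[int, ...] = (0x8100,)) -> List[int]:
    """Return the VLAN IDs found in a frame, outermost tag first."""
    vids = []
    offset = 12  # skip destination and source MAC addresses
    while len(frame) >= offset + 4:
        tpid, tci = struct.unpack_from("!HH", frame, offset)
        if tpid not in tpids:
            break  # reached the real EtherType, no more tags
        vids.append(tci & 0x0FFF)  # low 12 bits of the TCI hold the VLAN ID
        offset += 4
    return vids


macs = bytes(12)                      # placeholder MAC addresses
ip_payload = b"\x08\x00" + bytes(46)  # EtherType 0x0800 plus dummy payload

tagged_by_customer = macs + b"\x81\x00\x00\x76" + b"\x81\x00\x00\x0a" + ip_payload
untagged_by_customer = macs + b"\x81\x00\x00\x76" + ip_payload

print(vlan_stack(tagged_by_customer))    # [118, 10]
print(vlan_stack(untagged_by_customer))  # [118]
```

The second frame shows the native-VLAN case: the customer sent it untagged, so only the provider tag for VLAN 118 is present on the backbone.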

Posted in Switching

Comments

July 12, 2010 at 5:22 a.m. UTC

Really interesting post! Never even thought of this. Keep them coming.

July 12, 2010 at 11:54 a.m. UTC

Great post. It took me a while to wrap my head around dot1q tunneling the first time I read about it. Wish I had seen your article first; very concise description.

July 12, 2010 at 12:01 p.m. UTC

We also used the second-dot1q function; the config looked something like this:

    interface fastethernet0.19
    encapsulation dot1q 9 second-dot1q 20

I don't know the syntax exactly because it was some time ago that I worked for this service provider.

July 12, 2010 at 2:09 p.m. UTC

Nice Article, and I always enjoy reading your blog. Makes me wonder, though, if an SP using this would run into issues with CAM Table Overflows since in reality it would need to support so many more VLANs and MACs. Say you have 4000 customers using this and each has 100 vlans. That's like having traffic and MACs for 400,000 VLANs running through the SP Network!

Aaron

July 12, 2010 at 3:23 p.m. UTC

Thanks for the nice article on Q-in-Q tagging.

I've been aware of Q-in-Q tagging for a while now, but I haven't had a need for it yet. Now I have an example working config and thus more than a theoretical understanding.

Time to bookmark and file this one away until I need it. :-)

Grant. . . .

July 12, 2010 at 4:09 p.m. UTC

@AaronDhiman

Yes, you have the option of restricting the number of MAC addresses you learn on each customer port, taking a crapshoot and hoping you don't go over, or moving to more robust offerings like EoMPLS.

July 12, 2010 at 7:26 p.m. UTC

Great article! I never heard about this before....

Thanks.

July 12, 2010 at 10:24 p.m. UTC

Quick Question,

If the customer is not trunking to the SP, how are they supposed to place a tag on the frame before it gets to the SP switch?

July 12, 2010 at 10:52 p.m. UTC

NVM, I think I got it now.

The customer is configured to trunk, and when the SP gets the tagged frame, it slaps a tag on top of it.

The customer is configured to trunk, just not with the SP.

July 13, 2010 at 2:18 a.m. UTC

Thanks for the great writeup Stretchman!

Btw, why is it necessary to increase the MTU size to 1504? Is it because of the 2nd VLAN header (4 bytes)? If so, what if there was a 3rd tag and beyond (I wonder what the maximum number of VLAN tags an Ethernet frame can hold is)?

July 13, 2010 at 2:43 a.m. UTC

This looks really fun, I'm going to have to go practice this and play around with it.

July 13, 2010 at 4:05 a.m. UTC

The initial sentence mentions getting this across a VPN but the configs show switches. How do you get this through IPsec tunnels between a router / PIX and a router / ASA?

My first thought is that I'd like to expand my RSPAN VLAN and include a couple of remote sites... is that a workable idea?

July 13, 2010 at 4:13 a.m. UTC

@dantel: The term "VPN" applies to any type of virtualized, private network. In this case, we're talking about VLANs.

July 13, 2010 at 1:17 p.m. UTC

Really good post! I have heard about QinQ, but now everything is clear.

July 13, 2010 at 3:57 p.m. UTC

Very interesting and clear explanation, keep up the good work Stretch :-)

July 14, 2010 at 7:42 p.m. UTC

Thanks a lot for the information. This is new to me. The interesting part is that the scenario is understandable with an example.

July 14, 2010 at 9:05 p.m. UTC

Hi, isn't this the same as QinQ, and if yes, aren't you supposed to give an external VLAN (which I guess is the default VLAN for the interface) and then an inner VLAN?

July 17, 2010 at 4:19 p.m. UTC

I must say, fantastic. I've already read enough on the topic of VLANs, but this article really helps me to think from another perspective.

July 18, 2010 at 2:59 a.m. UTC

@Geert

I think you're thinking of QinQ tag termination on a layer 3 interface. That just drops the frames off in a different place.

@AaronDhiman

PBB-TE or EoMPLS would work better if you had to scale to that size.

@lcptek

Yes, all of the service provider's switches along the path need MTUs large enough to accommodate the extra size, whether it's 1518, 2000, 9216 (or whatever).

Also, you can QinQinQ (or maybe even more) as long as there is enough MTU.

August 3, 2010 at 3:45 p.m. UTC

Great post. We will be implementing this soon. Wondered how it will be configured! This explains a lot. Thank you!

July 10, 2011 at 5:24 p.m. UTC

Bless you man! You made it soooooo simple. Been reading this in different materials and never got a hold of it... Now I can teach anyone!

Thanks.

August 19, 2011 at 1:52 p.m. UTC

802.1q tunnel made simple, practice immediately...Thanks again

November 23, 2011 at 3:33 a.m. UTC

How does it work when we are doing a point-to-point EtherChannel? Is there any delay?

November 30, 2011 at 7:47 p.m. UTC

Very good and helpful article on 802.1q tunneling...

Thank you for sharing your valuable knowledge. Hope you will continue your good work...

May 24, 2012 at 12:02 p.m. UTC

Such a simple explanation...very easy to grasp...thanx a lot.........all the best..

June 26, 2012 at 5:04 p.m. UTC

On the customer edge device, do you need any configuration other than the following?

S1 Customer A

switchport mode trunk

switchport trunk allowed vlan x,y,z

S2 Customer A

switchport mode trunk

switchport trunk allowed vlan x,y,z

For example, a SVI for VLAN x,y,z?

November 12, 2012 at 7:05 a.m. UTC

It is Good.

Can you tell us how this actually happens? I mean, how does forwarding/learning of frames happen? Will the SP switches need to know all the MAC addresses of customer devices for forwarding?

Can you tell us more on it.

Regards

Farooq

November 21, 2012 at 7:24 a.m. UTC

How many VLANs could be stacked into a tunnel?

December 9, 2012 at 11:35 a.m. UTC

nice,

thank you very much for your post it is very interesting.

Best Regards Mohamed Abdel Rahman

October 21, 2014 at 5:34 p.m. UTC

Hi! Great article. Maybe it is too late (4 years since the article was published), but I can see in the attached capture that the ethertype code of the Ethernet header is set to 0x8100, which means regular dot1q encapsulation. I have some doubts here: shouldn't it be 0x9100 for QinQ encapsulation?

Thanks for your great blog! Kind regards.

Nacho.

November 4, 2014 at 11:46 p.m. UTC

great

January 21, 2015 at 2:20 p.m. UTC

For the folks asking above about maximum nesting, it's worth knowing that q-in-q tunneling is NOT done by encapsulation like a VPN. The ethertype is changed to a magic value, and the new VLAN tag is added. This means that you can't do q-in-q-in-q (etc.) unless each layer has a configurable ethertype (which some switches support, but not lower-end Cisco models like the 3650/3750).