Nexus 2200 FEX Configuration

By stretch | Thursday, March 29, 2012 at 2:20 a.m. UTC

In preparation for a major datacenter deployment, I've been re-familiarizing myself with Cisco's Nexus platform (and naturally, what I pick up on the job will make its way onto the blog). Today's lab involved attaching a Nexus 2200 fabric extender, or FEX, to a Nexus 5500.

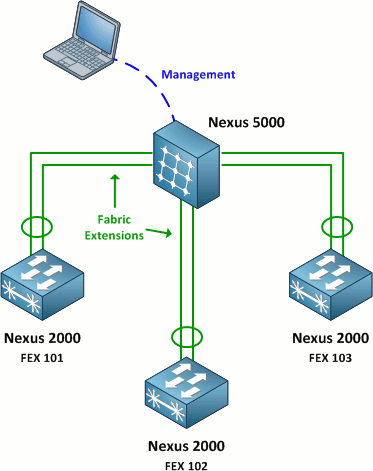

For those unfamiliar with this setup, a Nexus 2000 is essentially a standalone line module: It requires connectivity to a Nexus 5000 switch to function. All interface configuration is performed on the Nexus 5000, where every attached Nexus 2000 is treated as an individual slot. For example, port 8 on FEX 101 is referred to as Ethernet101/1/8 on the 5000. This is very similar in concept to stacking Catalyst 2960S or 3750 switches, except that the 2000s are connected to a 5000 via 10 Gbps Ethernet rather than via proprietary (and short) stacking cables.
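
The slot-style naming is easy to express in code. Here is a minimal Python sketch; the helper name `fex_interface` is made up for illustration and is not part of any Cisco tooling. It assumes FEX numbers fall between 100 and 199 and that host ports sit in slot 1, as in the Ethernet101/1/8 example.

```python
def fex_interface(fex_id: int, port: int, slot: int = 1) -> str:
    """Return the parent-switch name for a host port on a FEX.

    Assumption: FEX numbers run from 100 to 199 and host ports live in
    slot 1, matching the Ethernet101/1/8 example.
    """
    if not 100 <= fex_id <= 199:
        raise ValueError(f"FEX number {fex_id} is outside 100-199")
    if port < 1:
        raise ValueError("port numbers start at 1")
    return f"Ethernet{fex_id}/{slot}/{port}"


print(fex_interface(101, 8))  # Ethernet101/1/8
```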

The intended datacenter design is to deploy many Nexus 2000s as top-of-rack (ToR) switches and connect them back to Nexus 5000s at the end or middle of the row. This looks great on paper; however, the major drawback is that Nexus 2000s don't do any local switching: A packet from port 1 on a 2000 to port 2 on the same switch must travel up to the 5000 and back. This may or may not be a problem, depending on your oversubscription rates and latency requirements.
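
To put a number on oversubscription, here is a rough Python sketch. The function name is hypothetical, and the figures are assumptions for illustration: 48 host ports at 1 Gbps (as on an N2K-C2248TP-1GE) and two 10 Gbps fabric uplinks, with every host port saturated at once as the worst case.

```python
def oversubscription_ratio(host_ports: int, host_gbps: float,
                           uplinks: int, uplink_gbps: float) -> float:
    """Worst-case ratio of host-facing bandwidth to fabric uplink bandwidth."""
    if uplinks < 1 or uplink_gbps <= 0:
        raise ValueError("at least one uplink with positive bandwidth is required")
    return (host_ports * host_gbps) / (uplinks * uplink_gbps)


# Assumed figures: 48 x 1 Gbps host ports, 2 x 10 Gbps fabric uplinks.
ratio = oversubscription_ratio(48, 1.0, 2, 10.0)
print(f"{ratio:.1f}:1")  # 2.4:1
```

Because nothing is switched locally, even traffic between two ports on the same FEX counts against this ratio.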

With that said, let's look at how to connect a Nexus 2200 to a Nexus 5500.

Nexus 5500 Configuration

Before beginning, ensure that the FEX feature is enabled with the command show feature. If not, enable it.

switch(config)# feature fex

When the FEX is physically connected, the Nexus 5500 should automatically detect its presence even before the interfaces have been provisioned:

switch# show fex
  FEX         FEX           FEX                       FEX
Number    Description      State            Model            Serial
------------------------------------------------------------------------
---        --------     Discovered      N2K-C2248TP-1GE   SSI15470K7A

Since we're using two 10 Gbps Ethernet links to attach the FEX, we'll configure them as a single 20 Gbps port-channel. There's nothing new about this configuration; however, it is worth noting that a port-channel configured for fabric extension cannot run LACP, so the channel group is left in its default mode, "on."

switch(config)# interface e1/1 - 2
switch(config-if-range)# channel-group 101

Nexus Ethernet interfaces can operate in one of three modes: access, trunk, or FEX-fabric. You should already be familiar with the first two modes. The third mode enables fabric extension to a Nexus 2000. We configure the port-channel interface to operate in FEX-fabric mode, and then associate the attached FEX by assigning it a number between 100 and 199:

switch(config)# interface po101
switch(config-if)# switchport mode fex-fabric
switch(config-if)# fex associate 101
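
If you have more than a handful of FEXes to bring up, the steps above can be templated. The following Python sketch is only an illustration: the function name `fex_fabric_config` is hypothetical, it emits nothing beyond the commands already shown, and it assumes the port-channel number is reused as the FEX number (as with po101 and FEX 101), which is a convention rather than a requirement.

```python
def fex_fabric_config(fex_id: int, uplinks: str) -> str:
    """Build the fabric port-channel configuration for one FEX.

    Assumptions: the port-channel number is reused as the FEX number
    (as with po101 / fex 101), and `uplinks` is an interface range in
    the form the switch accepts, such as "e1/1 - 2".
    """
    if not 100 <= fex_id <= 199:
        raise ValueError(f"FEX number {fex_id} is outside 100-199")
    lines = [
        f"interface {uplinks}",
        f"  channel-group {fex_id}",
        f"interface po{fex_id}",
        "  switchport mode fex-fabric",
        f"  fex associate {fex_id}",
    ]
    return "\n".join(lines)


print(fex_fabric_config(101, "e1/1 - 2"))
```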

This is actually all that's needed to associate a Nexus 2000 with its parent 5000. If all goes well, the 5000 should take control of the 2000 and upgrade the FEX's software to match itself if necessary. The command show fex reveals that our new FEX is undergoing a software upgrade. This process can take several minutes and will be followed by a reboot.

switch# show fex
  FEX         FEX           FEX                       FEX
Number    Description      State            Model            Serial
------------------------------------------------------------------------
101        FEX0101      Image Download  N2K-C2248TP-1GE   SSI15470K7A

The command show fex detail provides more information. We can see that the Nexus 2200 shipped with NX-OS 4.2(1)N1(1) and is being upgraded to match NX-OS 5.0(3)N2(1) running on the 5500.

switch# show fex detail

FEX: 101 Description: FEX0101 state: Image Download

FEX version: 4.2(1)N1(1) [Switch version: 5.0(3)N2(1)]

FEX Interim version: 4.2(1)N1(0.002)

Switch Interim version: 5.0(3)N2(1)

Module Sw Gen: 12594 [Switch Sw Gen: 21]

post level: complete

pinning-mode: static Max-links: 1

Fabric port for control traffic: Eth1/1

Fabric interface state:

Po101 - Interface Up. State: Active

Eth1/1 - Interface Up. State: Active

Eth1/2 - Interface Up. State: Active

Fex Port State Fabric Port

Logs:

03/28/2012 19:49:08.622981: Module register received

03/28/2012 19:49:08.623910: Image Version Mismatch

03/28/2012 19:49:08.624170: Registration response sent

03/28/2012 19:49:08.624406: Requesting satellite to download image
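
If you want to check for a pending upgrade from a script rather than by eye, the version line is simple to parse. This is a minimal Python sketch under the assumption that the line keeps the "FEX version: X [Switch version: Y]" shape shown in this output; other NX-OS releases may format it differently, and the function name is my own.

```python
import re

# Matches "FEX version: <fex> [Switch version: <switch>]" only; the
# "FEX Interim version" line has different wording and is skipped.
VERSION_LINE = re.compile(
    r"FEX version:\s*(?P<fex>\S+)\s*\[Switch version:\s*(?P<switch>[^\]\s]+)\]"
)


def fex_versions(detail_output: str) -> tuple[str, str]:
    """Return (fex_version, switch_version) parsed from `show fex detail`."""
    match = VERSION_LINE.search(detail_output)
    if match is None:
        raise ValueError("no 'FEX version' line found")
    return match.group("fex"), match.group("switch")


sample = "FEX version: 4.2(1)N1(1) [Switch version: 5.0(3)N2(1)]"
fex, switch = fex_versions(sample)
print("upgrade pending" if fex != switch else "versions match")  # upgrade pending
```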

After the FEX reboots, we can see that it is now running the same NX-OS version as its parent:

switch# show fex detail

FEX: 101 Description: FEX0101 state: Online

FEX version: 5.0(3)N2(1) [Switch version: 5.0(3)N2(1)]

FEX Interim version: 5.0(3)N2(1)

Switch Interim version: 5.0(3)N2(1)

Extender Model: N2K-C2248TP-1GE, Extender Serial: FOC160411LY

Part No: 73-13232-01

Card Id: 99, Mac Addr: 2c:36:f8:36:be:82, Num Macs: 64

Module Sw Gen: 12594 [Switch Sw Gen: 21]

post level: complete

pinning-mode: static Max-links: 1

Fabric port for control traffic: Eth1/1

Fabric interface state:

Po101 - Interface Up. State: Active

Eth1/1 - Interface Up. State: Active

Eth1/2 - Interface Up. State: Active

Fex Port State Fabric Port

Eth101/1/1 Down Po101

Eth101/1/2 Down Po101

...

At this point, our FEX is fully functional and its interfaces appear as module 101 on the 5500.

switch# show interface status fex 101
--------------------------------------------------------------------------------
Port           Name               Status    Vlan      Duplex  Speed   Type
--------------------------------------------------------------------------------
Eth101/1/1     --                 notconnec 1         auto    auto    --
Eth101/1/2     --                 notconnec 1         auto    auto    --
Eth101/1/3     --                 notconnec 1         auto    auto    --
Eth101/1/4     --                 notconnec 1         auto    auto    --
Eth101/1/5     --                 notconnec 1         auto    auto    --
Eth101/1/6     --                 notconnec 1         auto    auto    --
...

The following configuration is generated automatically:

fex 101
  pinning max-links 1
  description "FEX0101"

We can enhance this configuration a bit by adding a custom description for the FEX, such as its physical location. We can also explicitly set the hardware type and serial number to ensure that only this specific device is attached as FEX 101.

fex 101
  pinning max-links 1
  description "LAB1-R204-F101"
  serial FOC160411LY
  type N2248T

One small issue I discovered is that the FEX will report two serial numbers: the chassis serial number (included in the "Serial" column of the show fex output) and the module serial number (listed as "Extender Serial" in the output of show fex detail). When specifying a serial number under FEX configuration, use the extender serial number from show fex detail.
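
To avoid copying the wrong serial by hand, the extender serial can be pulled straight out of the detail output. This is a small Python sketch that assumes the "Extender Serial:" label appears exactly as in the output above; the function name is hypothetical.

```python
import re

EXTENDER_SERIAL = re.compile(r"Extender Serial:\s*(\S+)")


def extender_serial(detail_output: str) -> str:
    """Return the extender (module) serial from `show fex detail` output."""
    match = EXTENDER_SERIAL.search(detail_output)
    if match is None:
        raise ValueError("no 'Extender Serial' field found")
    return match.group(1)


sample = "Extender Model: N2K-C2248TP-1GE,  Extender Serial: FOC160411LY"
print(extender_serial(sample))  # FOC160411LY
```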

Other Commands

Here are some other helpful FEX-related NX-OS commands. You can see that many legacy commands have simply been extended with the fex argument to view information regarding a specific FEX.

- show interface <type> <number> fex-intf

- show interface fex-fabric

- show module fex all | <number>

- show inventory fex all | <number>

- show environment fex all | <number>

- show version fex <number>

- [no] locator-led fex <number>

Posted in Data Center

Comments

March 29, 2012 at 2:57 a.m. UTC

One thing to note about max-pinning: using a value other than 1 causes the switch to "divide" its ports up between the uplinks. That means if you use a max-pinning value of 2 and have 2 uplinks, half your ports will be pinned to each uplink, with no redundancy available.

March 29, 2012 at 5:07 a.m. UTC

Another cool feature when running Nexus 5000 with the 2000 FEX switches is the redundancy option when using a vPC pair. When you have a vPC pair of 5000's you can split the FEX uplinks between the two 5000's. There is one exception to this rule. That is the 2232PP FEX. This is due to the FCoE support. End hosts that are SAN connected like to know to which SAN fabric they are connected. This means you have to connect all FEX uplinks from a 2232PP to a single 5000 in the vPC pair.

March 29, 2012 at 1:07 p.m. UTC

@itsjustrouting - Does that apply to the latest version NX-OS as well? I thought that was fixed in 5.1.

March 29, 2012 at 2:11 p.m. UTC

@itsjustrouting

While a cool feature, keep an eye out on fex limits, since it will eat up one on both 5ks, as opposed to straight through mode, where you can effectively double your fex count. This is especially harsh if you enable layer-3 and suddenly your limit is 8.

March 29, 2012 at 6:53 p.m. UTC

What are the main reasons for this kind of hardware? Do you have the impression that it's really just a datacenter product or also a real alternative for enterprise environments?

We have about 150 edge switches (mostly 48 ports, most of them in a stack of 2-3 devices) and our own fiber to the core. The core has about 45 links to aggregation and edge devices.

So a good candidate for this Nexus2200/5000 concept, I guess.

I do see the advantage regarding cli (one to rule them all). But I've also frequently read lots about annoying bugs.

I'd appreciate it if you could share some personal impressions regarding conflicting mindsets when designing Nexus- and Catalyst-based networks.

March 29, 2012 at 9:31 p.m. UTC

@Tomislav Kolanovic: Layer 3 is a bad idea on the 5010 and 5020 series gear, which is what I have deployed. I haven't had the pleasure of the 5548 as of yet. We had already begun our new data center build with all gear already purchased when the 5548 was released. I agree with your assessment of the FEX limitations. But in our environment we were more concerned with network availability. Also, if you use all of the uplinks on the FEX switches, you're pretty much pushing the connection limit anyway.

@Ruisu.Cisco: As far as I know, the 2232PP will always have this limitation due to FCoE. The assumption is that if you have a vPC pair of 5020's, the Fibre Channel modules in each switch will connect to a different SAN fabric. The 2232TM is a newer FEX switch that gives 10 Gb copper connectivity. With this one you can indeed span the vPC pair. This is due to, you guessed it, the lack of FCoE support.

@Kris: The Nexus product line is developed for the data center. For enterprise end-user connectivity, it just doesn't make sense. This is especially true if you have plans for VoIP. There is no PoE support (that I know of) in the Nexus product line. The FEX switches, as stated in the blog, don't do any local switching. This would imply no support for dCEF. The value-adds for running Nexus gear do not work for end-user computing. Stick with Catalyst gear for that. It is much better suited for that environment. Keep in mind, once you reach about 5 stackable switches, you're around the price point of a 6500 chassis. If you're looking at running Cius phones or other PoE+ devices, you're going to need something that can provide a lot of PoE.

March 30, 2012 at 7:09 a.m. UTC

As an additional note, the recommended best practice is to configure the FEX connections straight through and to use the vPC connections between the 2200's.

For example:

5k--5k
||  ||
2K  2K
 \\ /   VPC
  ||
Host (Etherchannel bond)

Also, note that the 2200 has BPDU guard permanently on ... so no stacking switches below it. It works very reliably if you make sure everything is configured perfectly for the vPC parameters. If you don't, the secondary vPC peer will disable the link (which can result in a false sense of security.)

We use the 2248's with good success, essentially as dual top of rack switches. It gives us the flexibility of a top of rack switch, the central management capabilities of a chassis and surpasses the performance characteristics of doing this with 3750's. There are limitations to what can be done for sure, but for data center applications it does seem to make sense.

I don't think this is a good fit for enterprise closets. Speed limitations (5500 does not support 100Mbps), the spanning tree design issues, etc. limit the use. Additionally, these devices put off a lot of heat. They are very dense. (5500 has 48 SFP+ in a 1U box and vents to the top, port-side.) I don't see these being cooled in most enterprise closets.

March 30, 2012 at 6:08 p.m. UTC

@Chris Proctor: We got around the BPDU guard issue by simply turning off BPDU's on the downstream switches. So, I do have at least 3 instances of 4948 switches running downstream of a 2248 FEX switch running in production today.

I have never seen vPC running on the 2200 FEX switches. As they have no intelligence of their own, I never attempted to create a vPC link between them. I must admit, I am a little confused by your diagram.

One other real value to the FEX: when it comes to FCoE, the 2232PP supports FCoE. The 5000's and 2200's were available before FCoE multi-hop was a ratified standard. So, you could spread out your 2232PP FEX switches and avoid FCoE multi-hop because the FEX is part of the same fabric. Now that was cool :)

March 30, 2012 at 6:40 p.m. UTC

Fun Notes:

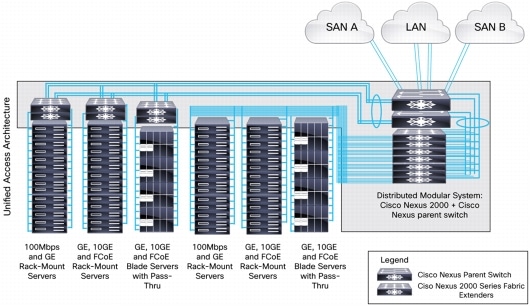

The Cisco Nexus 2000 Series Fabric Extenders comprise a category of data center products designed to simplify data center access architecture and operations. The Cisco Nexus 2000 Series uses the Cisco Fabric Extender architecture to provide a highly scalable unified server-access platform across a range of 100 Megabit Ethernet, Gigabit Ethernet, 10 Gigabit Ethernet, unified fabric, copper and fiber connectivity, rack, and blade server environments.

The platform is ideal to support today's traditional Gigabit Ethernet while allowing transparent migration to 10 Gigabit Ethernet, virtual machine-aware unified fabric technologies. The Cisco Nexus 2000 Series Fabric Extenders behave as remote line cards for a parent Cisco Nexus switch. The fabric extenders are essentially extensions of the parent Cisco Nexus switch fabric, with the fabric extenders and the parent Cisco Nexus switch together forming a distributed modular system. This architecture enables physical topologies with the flexibility and benefits of both top-of-rack (ToR) and end-of-row (EoR) deployments.

Cisco Nexus 2000 Series Deployment Scenarios

The fabric extenders can be used in the following deployment scenarios:

- Rack servers with 100 Megabit Ethernet, Gigabit Ethernet, or 10 Gigabit Ethernet network interface cards (NICs); the fabric extender can be physically located at the top of the rack and the Cisco Nexus parent switch can reside in the middle or at the end of the row, or the fabric extender and the Cisco Nexus parent switch can both reside at the end or middle of the row.
- 10 Gigabit Ethernet and FCoE deployments, using servers with converged network adapters (CNAs) for unified fabric environments with the Cisco Nexus 2232PP.
- 1/10 Gigabit Ethernet BASE-T server connectivity with ease of migration from 1 to 10GBASE-T and effective reuse of structured cabling.
- Server racks with integrated lights-out (iLO) management, with 100 Megabit Ethernet or Gigabit Ethernet management and iLO interfaces.
- Gigabit Ethernet and 10 Gigabit Ethernet blade servers with pass-through blades.
- Low-latency, high-performance computing environments.
- Virtualized access.

Cisco Nexus 2000 Series Fabric Extenders Design Scenarios, from Left to Right:

Cisco Nexus 2000 Series Single Connected to One Upstream Cisco Nexus 5000 Series Switch, Cisco Nexus 2000 Series Dual-Connected to Two Upstream Cisco Nexus 5000 Series Switches, and Cisco Nexus 2000 Series Single-Connected to One Upstream Cisco Nexus 7000 Series Switch

Datacenter Deployment

For More Information

- Cisco Nexus 2000 Series Fabric Extenders
- Cisco Nexus 5000 Series Switches
- Cisco Nexus 7000 Series Switches
- Cisco NX-OS Software

File: Nexus Data Sheet

April 2, 2012 at 6:58 p.m. UTC

@Chris Proctor:

Best practice is to configure vPC between two 5000 and create port channel from one (or two) 2000 to both 5000.

April 3, 2012 at 4:20 a.m. UTC

I got new 2248 and 5548 today, and didn't see the sh fex and sh fex detail report different serial numbers. Maybe I got different firmware.

April 5, 2012 at 1:10 p.m. UTC

You forgot to mention that Fabric Extenders can also be used with a Nexus 7000 switch; just thought I'd add that. (It sounds as if it were exclusive to 5k's.)

April 10, 2012 at 10:57 a.m. UTC

There are limitations as to how many FEXs an N5K will support. This becomes an issue when you vPC down to a 2k from 2 5ks.

For instance - we have 2 Nexus 5010s and 18 2200 series FEXs. The 5010 will support 12 FEXs, so technically, if we went 1:1 with a port-channel down to the 2ks we could support 24 FEXs. If we decided to vPC down to the same FEX from both 5ks, we'd be in effect limiting the number of FEXs in our datacenter by half. Of course this may be what you want - You may not need 96 ports in a rack or have the space for 2 2200 series FEX. In that situation, I have used the vPC down to a 2k and it works great.

You just have to keep in mind (and I don't believe this is as much of an issue with the 55xx Nexus switches, as they support more 2Ks) that the configuration from both 5Ks must be the exact same in order for the FEX to function properly. That means a copy/paste of the config of each port that you configure for a particular vPC'ed FEX, or using the config sync feature. The config sync feature is pretty simple to implement so I'd recommend it. I also would recommend labeling each FEX so that you know if it's a vPC'ed FEX or just dedicated to one 5k. For instance:

5010-1# sh fex
  FEX         FEX           FEX                       FEX
Number    Description      State            Model            Serial
------------------------------------------------------------------------
101    Rack1-1-SHARED      Online     N2K-C2248TP-1GE     -
102    Rack2-1             Online     N2K-C2232PP-10GE    -
104    Rack4-1             Online     N2K-C2248TP-1GE     -
106    Rack6-1-SHARED      Online     N2K-C2248TP-1GE     -
108    Rack8-1             Online     N2K-C2232PP-10GE    -
109    RACK9-1-SHARED      Online     N2K-C2248TP-1GE     -
111    Rack11-1            Online     N2K-C2248TP-1GE     -
124    RACK14-1-SHARED     Online     N2K-C2248TP-1GE     -
125    RACK16-1            Online     N2K-C2248TP-1GE     -
145    Rack23-1            Online     N2K-C2248TP-1GE     -

I was encouraged against vPC'ing down to a FEX in a DCUFI class I attended, but have found that if you manage things properly it has its place.

April 17, 2012 at 1:11 a.m. UTC

Does anyone have any detailed info on the L3 capabilities of the 2200 series when connected to an 7K parent switch? All I could find is that no routing protocols can be run. I can't find any more info than that. So I'm wondering if features like IPv4/IPv6 unicast, uRPF (v4/v6), HSRP/VRRP, ACL's are supported?

April 18, 2012 at 2:20 a.m. UTC

The thing with VPC on the Nexus and FEXes: you can either VPC the FEX to both 5k, or VPC something else off the FEX. And VPCed FEXes need the config sync feature, which I've implemented a few times now and is ok once you get the hang of it, but is still a pain. I would recommend, as the upthread poster said, uplinking the FEX to an individual 5K and then running LACP on your servers, one link to each FEX.

April 18, 2012 at 11:18 a.m. UTC

I wouldn't turn off bpdus on a switch connected to a FEX. That is I wouldn't do no spanning tree. I put bpdufilter on the port channel of the downstream switch connected to the FEX.

If you have multicast take a look at the commands vpc bind vrf default and ip pim pre-build spt. Not explicitly stated as needed but you know how that is.

May 4, 2012 at 8:31 p.m. UTC

Whoever told you not to use vPC to the Nexus 2k's obviously doesn't do Data Center design. Unless you were doing etherchanneling to the servers prior to the Nexus 5.1.3.N2.1, you would only do single-homed 5k to 2k, but now that etherchannels to servers across FEXes have been supported on the new code, even this design of single-homed has been eradicated.

I've deployed a couple hundred 5k's and 400+ 2k's and I've never had to do single-homed yet.

Nexus 5k - 2k setup should be considered like a pair of 6500s with single supervisors. You want your downstream switches to have connectivity to both upstream supervisors. The difference, minus the obvious, with the 5k's is that you avoid the blocking by the elimination of STP with vPC's.

Yes you could deploy the Nexus 5k 2k in any deployment, but Cisco will not tell you this as it will conflict with their own business units. I use them in DMZ's, Cores and Access depending on the Customer needs.

July 12, 2013 at 5:50 p.m. UTC

Hey Guys,

Is it possible to pre-configure FEX ports while the FEX is not actually installed on the 5k/7k??

We will connect the FEX in the future.

May 17, 2014 at 11:55 a.m. UTC

I also could not pre-configure an N2k connected to an N7k; for the N5k there is this option:

(config)# slot 1xx
# provision model

October 21, 2015 at 3:04 a.m. UTC

I love this article. Thank you very much