When does VLAN pruning occur?

By stretch | Thursday, June 26, 2008 at 1:04 a.m. UTC

sgtcasey over on networking-forum.com recently posed an interesting question: what triggers VLAN pruning? Specifically, will a switch only allow a VLAN to be pruned from a trunk if it has no access ports configured for that VLAN? Or is it enough to merely have no active ports?

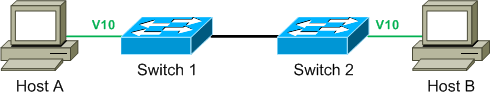

Consider a simple trunking scenario:

Switch 1 is the VTP server, and has propagated VLANs 10, 20, and 30 to switch 2. The interfaces to which hosts A and B attach are configured as access ports in VLAN 10, and an 802.1Q trunk is formed between the two switches. By examining the trunk status on either switch we can verify that VLANs 1 and 10 are being passed while the others are pruned in both directions.

    S1# show interface trunk

    Port        Mode         Encapsulation  Status        Native vlan
    Gi0/1       on           802.1q         trunking      1

    Port        Vlans allowed on trunk
    Gi0/1       1-4094

    Port        Vlans allowed and active in management domain
    Gi0/1       1,10,20,30

    Port        Vlans in spanning tree forwarding state and not pruned
    Gi0/1       1,10

Switch 2:

    S2# show interface trunk
    ...
    Port        Vlans in spanning tree forwarding state and not pruned
    Fa0/1       1,10
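When checking this on more than a couple of switches, the last section of the output can be pulled out with a short script. The following is a minimal sketch in Python, assuming the command output has already been captured as text and follows the usual one-line-per-port layout. The function names and the sample text are illustrative and not part of any Cisco tool, and VLAN lists that wrap onto continuation lines are not handled.

```python
def expand_vlan_list(text):
    """Expand a VLAN list such as '1,10,20-22' into a sorted list of ints."""
    vlans = set()
    for part in text.split(","):
        part = part.strip()
        if not part or part.lower() == "none":
            continue
        if "-" in part:
            low, high = part.split("-", 1)
            vlans.update(range(int(low), int(high) + 1))
        else:
            vlans.add(int(part))
    return sorted(vlans)


def not_pruned_vlans(show_output):
    """Map each trunk port to its 'forwarding state and not pruned' VLANs."""
    result = {}
    in_section = False
    for line in show_output.splitlines():
        fields = line.split()
        if not fields:
            in_section = False
            continue
        if fields[0] == "Port":
            # Each section starts with a header line beginning with "Port".
            in_section = "not pruned" in line
            continue
        if in_section and len(fields) >= 2:
            result[fields[0]] = expand_vlan_list("".join(fields[1:]))
    return result


# Sample text modeled on the S1 output above (assumed layout).
SAMPLE = """\
Port        Mode         Encapsulation  Status        Native vlan
Gi0/1       on           802.1q         trunking      1

Port        Vlans allowed on trunk
Gi0/1       1-4094

Port        Vlans allowed and active in management domain
Gi0/1       1,10,20,30

Port        Vlans in spanning tree forwarding state and not pruned
Gi0/1       1,10
"""

print(not_pruned_vlans(SAMPLE))  # {'Gi0/1': [1, 10]}
```

Comparing the result for both ends of a trunk makes any asymmetric pruning easy to spot.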

When host B is disconnected, its interface on switch 2 becomes inactive. As switch 2 has no remaining active ports in VLAN 10, VLAN 10 becomes eligible for pruning. After roughly 30 seconds, we can see that switch 1 is now pruning VLAN 10 from the trunk (VLAN 10 is absent from the last line of the output):

    S1# show interface trunk
    ...
    Port        Vlans in spanning tree forwarding state and not pruned
    Gi0/1       1

The VLAN remains unpruned on switch 2's end of the trunk, because it knows switch 1 still has at least one active port in VLAN 10:

    S2# show interface trunk
    ...
    Port        Vlans in spanning tree forwarding state and not pruned
    Fa0/1       1,10
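The asymmetry is easier to see as a toy model. The sketch below is a deliberate simplification for this two-switch topology, not an implementation of VTP: each switch keeps a VLAN unpruned on its end of the trunk only if its neighbor has at least one active port in that VLAN, and VLAN 1 is always carried because it is not pruning-eligible. The function name and data layout are made up for illustration.

```python
# VLAN 1 is never pruning-eligible, so it always stays on the trunk.
ALWAYS_CARRIED = {1}


def trunk_vlans(local_known, neighbor_active):
    """VLANs the local switch keeps unpruned on its trunk to the neighbor.

    local_known:     VLANs that exist on the local switch
    neighbor_active: VLANs in which the neighbor has an active port
    """
    wanted = set(neighbor_active) | ALWAYS_CARRIED
    return sorted(wanted & set(local_known))


known = {1, 10, 20, 30}   # VLANs propagated by the VTP server
s1_active = {10}          # host A is still connected to switch 1
s2_active = set()         # host B has been disconnected from switch 2

print("S1 trunk:", trunk_vlans(known, s2_active))  # S1 trunk: [1]
print("S2 trunk:", trunk_vlans(known, s1_active))  # S2 trunk: [1, 10]
```

Each switch's "not pruned" list therefore reflects what the other end needs, which is why the two ends of the same trunk can disagree.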

Posted in Switching

Comments

June 28, 2008 at 12:43 a.m. UTC

Hey!

A little bit confused. I have tried this example using GNS3, and I couldn't grasp the idea: if host B is shut down, shouldn't VLAN 10 be pruned on switch 2?

Thanks for your excellent material

July 14, 2011 at 8:13 p.m. UTC

So when exactly does SW1 stop pruning that VLAN? Once the port shows a link, up and up? (I would hope.) I have seen a situation where we kept losing connectivity to two devices that communicate very little. When the problem occurred, I could see that the VLAN was pruned. The ports were active and connected; however, I am guessing we had no communications and that is what led the switch to prune them.

Any Ideas?

September 13, 2011 at 1:02 p.m. UTC

Hi DanDman,

One way to end that problem is to remove that VLAN from the pruning-eligible list using the 'switchport trunk pruning vlan remove' command on the trunk interface.

Regards, Angela

PS: Maybe the roughly 30-second transition is due to STP (Max Age timer)?

June 20, 2016 at 11:18 p.m. UTC

I realize this is an old post but wanted to comment and get feedback.

We are using more VLANs / subnets now, and I am considering no longer specifying an allowed-VLAN list on each trunk and allowing the default of all VLANs instead. Adding a VLAN to each port channel is very static and time-consuming, and not easy to automate. We still need to create the VLAN on the edge switches (VTP transparent), but this is easy to script and the same for Nexus / IOS.

August 23, 2016 at 5:08 p.m. UTC

Good post! Helped me when I got stuck on this issue while doing CCIE lab practice.

We just need to see that "not pruned" on SW2 means SW1 still has an active port in that VLAN; that's why SW2 shows that VLAN 10 is not pruned. At the same time, SW2 has no active port in VLAN 10, so SW1 removes VLAN 10 from its "not pruned" list, meaning it is now being pruned. The way to look at this scenario is from the perspective of the switch at the other end. My 2 cents. Thanks