Cumulus Linux: First Impressions

By stretch | Wednesday, October 1, 2014 at 2:05 a.m. UTC

Typically, when you buy a network router or switch, it comes bundled with some version of the manufacturer's operating system. Cisco routers come with IOS (or some derivative), Juniper routers come with Junos, and so on. But with the recent proliferation of merchant silicon, there seem to be fewer and fewer differences between competing devices under the hood. For instance, the Juniper QFX3500, the Cisco Nexus 3064, and the Arista 7050S are all powered by an off-the-shelf Broadcom chipset rather than custom ASICs developed in-house. Among such similar hardware platforms, the remaining differentiator is the software.

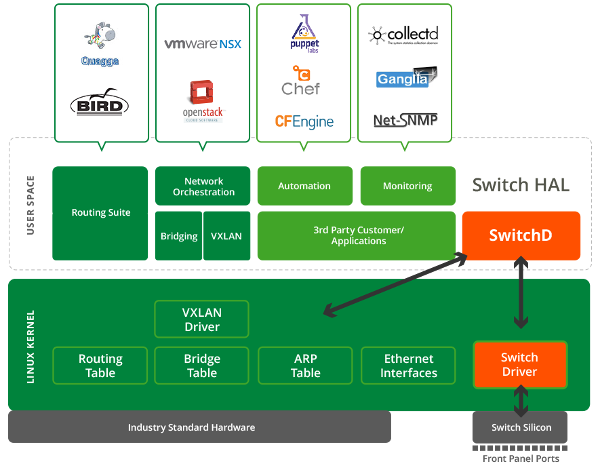

One company looking to benefit from this trend is Cumulus Networks. Cumulus does not produce or sell hardware, only a network operating system: Cumulus Linux. The Debian-based OS is built to run on whitebox hardware you can purchase from a number of partner Original Design Manufacturers (ODMs). (Their hardware compatibility list includes a number of 10GE and 40GE switch models from different vendors.)

Cumulus Linux is, as the name implies, Linux. There is no "front end" CLI as on, for example, Arista platforms. Upon login you are presented with a Bash terminal and all the standard Linux utilities (plus a number of not-so-standard bits). Like any OS, Cumulus handles interactions with the underlying hardware and among processes.

The Platform

As you would expect, the software is licensed. (However, the combined cost of the Cumulus software coupled with whitebox hardware is remarkably competitive.) A license is required to activate the front-panel interfaces, which are presented to user space as swp* interfaces, starting with swp1. These appear as Ethernet interfaces normally do on Linux, visible with ip link show. (ifconfig has long been deprecated by the community in favor of the iproute2 family of tools.)

cumulus@leaf1$ ip link show

1: lo: mtu 16436 qdisc noqueue state UNKNOWN mode DEFAULT

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

2: eth0: mtu 1500 qdisc pfifo_fast state UP mode DEFAULT qlen 1000

link/ether 00:e0:ec:25:7d:43 brd ff:ff:ff:ff:ff:ff

3: swp1: mtu 1500 qdisc pfifo_fast master bond0 state UP mode DEFAULT qlen 500

link/ether 00:e0:ec:25:7d:45 brd ff:ff:ff:ff:ff:ff

4: swp2: mtu 1500 qdisc pfifo_fast master bond0 state UP mode DEFAULT qlen 500

link/ether 00:e0:ec:25:7d:45 brd ff:ff:ff:ff:ff:ff

5: swp3: mtu 1500 qdisc noop state DOWN mode DEFAULT qlen 500

link/ether 00:e0:ec:25:7d:46 brd ff:ff:ff:ff:ff:ff

...

Interfaces are configured the same way as on most other Linux platforms. For example, configuring an LACP port-channel is done with the following bit of config in /etc/network/interfaces:

auto swp1
iface swp1

auto swp2
iface swp2

auto bond0
iface bond0
    bond-slaves swp1 swp2
    bond-mode 802.3ad
    bond-miimon 100
    bond-use-carrier 1
    bond-lacp-rate 1
    bond-min-links 1
    bond-xmit-hash-policy layer3+4
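Since stanzas like this tend to be generated rather than typed once automation is involved, here is a minimal Python sketch that renders the same bond configuration from a few parameters. The function name `render_bond` and its default values are illustrative assumptions, not part of Cumulus or ifupdown; the option names are simply the ones used in the config above.

```python
def render_bond(name, slaves, lacp_rate=1, min_links=1, miimon=100,
                hash_policy="layer3+4"):
    """Render ifupdown stanzas for an LACP bond and its member ports.

    Hypothetical helper: it only builds text, it does not touch the system.
    """
    lines = []
    # Each member port gets its own (empty) stanza, as in the config above.
    for port in slaves:
        lines += [f"auto {port}", f"iface {port}", ""]
    lines += [
        f"auto {name}",
        f"iface {name}",
        f"    bond-slaves {' '.join(slaves)}",
        "    bond-mode 802.3ad",
        f"    bond-miimon {miimon}",
        "    bond-use-carrier 1",
        f"    bond-lacp-rate {lacp_rate}",
        f"    bond-min-links {min_links}",
        f"    bond-xmit-hash-policy {hash_policy}",
    ]
    return "\n".join(lines) + "\n"


config = render_bond("bond0", ["swp1", "swp2"])
print(config)
```

The rendered text could then be written out by whatever tool manages the switch; how it is merged into /etc/network/interfaces is left open here.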

The interface is then brought up manually with sudo ifup bond0. Its status can be read from /proc/net/bonding/bond0:

cumulus@leaf1$ cat /proc/net/bonding/bond0 | less

Ethernet Channel Bonding Driver: v3.7.1 (April 27, 2011)

Bonding Mode: IEEE 802.3ad Dynamic link aggregation

Transmit Hash Policy: layer3+4 (1)

MII Status: up

MII Polling Interval (ms): 100

Up Delay (ms): 0

Down Delay (ms): 0

802.3ad info

LACP rate: fast

Min links: 1

Aggregator selection policy (ad_select): stable

System Identification: 65535 00:e0:ec:25:7d:45

Active Aggregator Info:

Aggregator ID: 1

Number of ports: 2

Actor Key: 17

Partner Key: 17

Partner Mac Address: 00:e0:ec:25:7d:af

Slave Interface: swp2

MII Status: up

Speed: 1000 Mbps

Duplex: full

Link Failure Count: 1

Permanent HW addr: 00:e0:ec:25:7d:45

Aggregator ID: 1

Slave queue ID: 0

Slave Interface: swp1

MII Status: up

Speed: 1000 Mbps

Duplex: full

Link Failure Count: 2

Permanent HW addr: 00:e0:ec:25:7d:44

Aggregator ID: 1

Slave queue ID: 0
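Because the status file is plain "key: value" text, it is straightforward to consume from a script. The following is a rough Python sketch, assuming the layout shown above; `parse_bond_status` and the trimmed `SAMPLE` text are illustrative, not a Cumulus tool. It treats everything before the first "Slave Interface" line as bond-level data and groups the rest per member port.

```python
def parse_bond_status(text):
    """Split /proc/net/bonding/<bond> text into bond-level and per-slave fields."""
    bond, slaves = {}, {}
    current = bond  # fields before the first "Slave Interface" belong to the bond
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        key, value = key.strip(), value.strip()
        if not sep or not value:
            continue  # headings such as "802.3ad info" carry no value
        if key == "Slave Interface":
            current = slaves.setdefault(value, {})
        else:
            current[key] = value
    return bond, slaves


# Trimmed copy of the output above, used as sample input.
SAMPLE = """\
Bonding Mode: IEEE 802.3ad Dynamic link aggregation
MII Status: up
Active Aggregator Info:
Aggregator ID: 1
Number of ports: 2

Slave Interface: swp2
MII Status: up
Link Failure Count: 1
Aggregator ID: 1

Slave Interface: swp1
MII Status: up
Link Failure Count: 2
Aggregator ID: 1
"""

bond, slaves = parse_bond_status(SAMPLE)
# Assumption: a member whose aggregator ID differs from the active
# aggregator has not joined the active bundle.
inactive = [name for name, fields in slaves.items()
            if fields["Aggregator ID"] != bond["Aggregator ID"]]
print(sorted(slaves), inactive)
```

Comparing each member's aggregator ID against the active one is only a coarse health check; it does not expose the LACP state machine itself.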

Similarly, we can configure switch ports as part of a bridge group (analogous to a VLAN or SVI interface):

auto swp5
iface swp5

auto swp6
iface swp6

auto vlan100
iface vlan100
    bridge-ports swp5 swp6
    bridge-stp on

Again, we then turn up the interface. brctl displays the status of all bridge interfaces.

cumulus@leaf1$ sudo ifup vlan100

cumulus@leaf1$ brctl show

bridge name     bridge id               STP enabled     interfaces
vlan100         8000.00e0ec257d48       yes             swp5
                                                        swp6

We can use ethtool to display layer 1 and layer 2 link information:

cumulus@leaf1$ sudo ethtool swp1

Settings for swp1:

Supported ports: [ TP ]

Supported link modes: 100baseT/Half 100baseT/Full

1000baseT/Full

Supported pause frame use: Symmetric Receive-only

Supports auto-negotiation: Yes

Advertised link modes: 100baseT/Half 100baseT/Full

1000baseT/Full

Advertised pause frame use: Symmetric

Advertised auto-negotiation: No

Speed: 1000Mb/s

Duplex: Full

Port: FIBRE

PHYAD: 0

Transceiver: external

Auto-negotiation: on

Current message level: 0x00000000 (0)

Link detected: yes

Yet another utility, mstpctl, displays STP information:

cumulus@leaf1$ mstpctl showbridge vlan100
vlan100 CIST info
  enabled         yes
  bridge id       8.000.00:E0:EC:25:7D:48
  designated root 8.000.00:E0:EC:25:7D:48
  regional root   8.000.00:E0:EC:25:7D:48
  root port       none
  path cost     0          internal path cost   0
  max age       20         bridge max age       20
  forward delay 15         bridge forward delay 15
  tx hold count 6          max hops             20
  hello time    2          ageing time          300
  force protocol version     rstp
  time since topology change 137s
  topology change count      0
  topology change            no
  topology change port       None
  last topology change port  None

How about LLDP? Yep, there's a utility for that too:

cumulus@leaf1$ sudo lldpcli show neighbors

-------------------------------------------------------------------------------

LLDP neighbors:

-------------------------------------------------------------------------------

Interface: eth0, via: LLDP, RID: 1, Time: 0 day, 06:09:36

Chassis:

ChassisID: mac 08:9e:01:ce:dc:22

SysName: colo-mgmt-switch

SysDescr: Cumulus Linux

MgmtIP: 100.64.3.2

Capability: Bridge, on

Capability: Router, on

Port:

PortID: mac 08:9e:01:ce:dc:34

PortDescr: swp18

-------------------------------------------------------------------------------

Interface: swp2, via: LLDP, RID: 3, Time: 0 day, 05:39:46

Chassis:

ChassisID: mac 00:e0:ec:25:7d:ad

SysName: leaf2

SysDescr: Cumulus Linux

MgmtIP: 192.168.0.12

Capability: Bridge, off

Capability: Router, on

Port:

PortID: ifname swp2

PortDescr: swp2

...
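One practical use for this output is checking cabling against a plan. Below is a small Python sketch, assuming the plain-text layout shown above; `parse_lldp_neighbors`, the `SAMPLE` text, and the `expected` mapping are all hypothetical names introduced for illustration. It records the neighbor's SysName and PortDescr for each local interface.

```python
import re


def parse_lldp_neighbors(text):
    """Map each local interface to its neighbor's SysName and PortDescr."""
    neighbors = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        match = re.match(r"Interface:\s*([^,\s]+),", line)
        if match:
            # A new "Interface:" line starts the record for that local port.
            current = neighbors.setdefault(match.group(1), {})
            continue
        if current is None:
            continue
        for field in ("SysName", "PortDescr"):
            if line.startswith(field + ":"):
                current[field] = line.split(":", 1)[1].strip()
    return neighbors


# Trimmed copy of the output above, used as sample input.
SAMPLE = """\
Interface: eth0, via: LLDP, RID: 1, Time: 0 day, 06:09:36
  Chassis:
    ChassisID: mac 08:9e:01:ce:dc:22
    SysName: colo-mgmt-switch
  Port:
    PortID: mac 08:9e:01:ce:dc:34
    PortDescr: swp18
Interface: swp2, via: LLDP, RID: 3, Time: 0 day, 05:39:46
  Chassis:
    SysName: leaf2
  Port:
    PortID: ifname swp2
    PortDescr: swp2
"""

neighbors = parse_lldp_neighbors(SAMPLE)

# Hypothetical cabling plan: local port -> (neighbor name, neighbor port).
expected = {"swp2": ("leaf2", "swp2")}
for port, (name, remote_port) in expected.items():
    seen = neighbors.get(port, {})
    ok = (seen.get("SysName"), seen.get("PortDescr")) == (name, remote_port)
    print(port, "ok" if ok else "MISMATCH")
```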

None of this should look unfamiliar to anyone who has spent a fair amount of time configuring networking on Linux, as none of the commands above are specific to Cumulus (although Cumulus does come packaged with a good number of custom tools).

Dynamic routing is all handled by Quagga, which offers a modal (Cisco IOS-like) configuration interface through vtysh. In the interest of automatability, Cumulus also includes their own non-modal (Juniper "set" style) configuration utility. For example, the two configurations below are identical in effect:

quagga(config)# router bgp 65002
quagga(config-router)# redistribute static

cumulus@switch:~$ sudo cl-bgp as 65002 redistribute add static

The routing table is, of course, displayed with ip route show:

cumulus@leaf1$ ip route show
default via 192.168.0.1 dev eth0
192.168.0.0/24 dev eth0 proto kernel scope link src 192.168.0.11
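Output like this is also easy to turn into structured data. Here is a minimal Python sketch, assuming simple routes like the two shown above, where everything after the prefix comes in keyword/value pairs; `parse_routes` is a hypothetical helper, and routes carrying standalone flags or a leading route type would need more handling.

```python
def parse_routes(text):
    """Turn simple `ip route show` lines into dictionaries."""
    routes = []
    for line in text.splitlines():
        tokens = line.split()
        if not tokens:
            continue
        route = {"prefix": tokens[0]}
        # Remaining tokens are read as keyword/value pairs (via, dev, proto, ...).
        rest = tokens[1:]
        for key, value in zip(rest[0::2], rest[1::2]):
            route[key] = value
        routes.append(route)
    return routes


# The two routes from the output above.
SAMPLE = """\
default via 192.168.0.1 dev eth0
192.168.0.0/24 dev eth0 proto kernel scope link src 192.168.0.11
"""

routes = parse_routes(SAMPLE)
for route in routes:
    print(route["prefix"], "->", route.get("via", "directly connected"), route["dev"])
```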

Linux? In my Switch?

I'm not going to lie: Cumulus Linux was not immediately appealing to me. Network engineers have never been quite at peace with the idea of using Linux for traffic forwarding, even if it is running under the hood of more network devices than we care to admit. Cumulus is unapologetically Linux, and makes no effort to replicate something comfortable for the experienced Cisco or Juniper CLI jockey. Unlike most network platforms, it doesn't have a portable, atomic configuration file, and its command line experience is probably never going to be as smooth as IOS or Junos. But that's okay. Cumulus is focused on the future: network automation and orchestration.

Most customers who deploy Cumulus today rarely, if ever, configure a switch by hand. Instead, they rely on tools like Ansible, Chef, or Puppet to automate configuration tasks for them. Practically speaking, this is the only way to effectively manage a data center with hundreds of racks of gear, and that's where Cumulus has a distinct edge.

When you order a whitebox switch from an ODM, it probably won't come with Cumulus installed. It will have only a small bootloader named ONIE. The Open Network Install Environment is essentially GRUB and busybox: It provides just enough functionality to download, install, and boot a "real" operating system like Cumulus. (Note that ONIE is not specific to Cumulus: It can theoretically be used to download whatever OS you choose.) This is analogous to how PXE booting is used to automatically provision servers.

Cumulus supports optional zero-touch provisioning. I haven't played with it yet, but the idea is that you can precreate a configuration script for every device to be deployed and keep them on a file server somewhere inside your network. When a switch boots up, it downloads and runs the script it has been pointed to. With a bit of planning, it's entirely feasible to deploy switches straight out of the box into production without any interaction with the local command line, and that's a pretty attractive prospect.

Cumulus is still a relatively young platform, and sure to be full of bumps and rough edges, but it holds quite a bit of promise, especially positioned as a cost-effective underlay platform for NSX or Contrail or whatever comes out next week. I'm hoping to write up some more posts on Cumulus once I've gotten to spend more time with it.

Posted in Data Center, Hardware

Comments

October 1, 2014 at 2:22 p.m. UTC

Cumulus Networks has quite a few automated labs you can follow along in their workbench. https://support.cumulusnetworks.com/hc/en-us/articles/201787566-Setting-up-a-Basic-Puppet-Lab

October 1, 2014 at 3:49 p.m. UTC

Pretty good write up.

I would love to see this automation actually working on a network

October 1, 2014 at 9:22 p.m. UTC

The front end Arista offers presents some additional (and quite useful) info compared to the vanilla Linux. For instance, regarding LACP:

A1(config)#sh port-channel 3

Port Channel Port-Channel3:

Active Ports: Ethernet13 PeerEthernet13

Configured, but inactive ports:

Port                Reason unconfigured
------------------- -----------------------------------------
Ethernet3           Link down while waiting for LACP response
PeerEthernet3       Link down while waiting for LACP response

I wasn't able to find details about the state of the LACP negotiation in /proc/net/bonding/bond0 or elsewhere.

October 2, 2014 at 3:46 p.m. UTC

Just to note, other switch vendors are going that route as well. For example:

http://www.pcworld.com/article/2046976/opscode-adds-networking-to-chef-management-capabilities.html

http://puppetlabs.com/blog/puppet-labs-cisco-bring-automation-data-center-networking

Zero touch provisioning is also getting to be a thing among switch vendors. For example, Cisco has "Power On Auto Provisioning":

October 3, 2014 at 11:32 a.m. UTC

is this bash shell susceptible to shell shock?

October 3, 2014 at 4:46 p.m. UTC

This is a good write up! The discussion on value for Linux based OS is very interesting as well. For instance, it would be good to understand differentiation Cumulus Linux has from any other Linux for a network operations team. Especially given network operations team know and love their tools, how would this change or impact the tooling etc. In the end it is about dollars and cents.

October 3, 2014 at 8:06 p.m. UTC

I tried to find pricing, but every vendor tells me to contact them first.

I would be interested in PoE switches running on Linux, so I can put our VoIP product on them.

October 6, 2014 at 3:38 p.m. UTC

Can you lab this on a normal server or even esx?

March 27, 2015 at 12:01 a.m. UTC

There's another product that's been quietly in the market doing this very thing. Check out Mikrotik.com

It runs on off-the-shelf hardware, or they have their own. It's robust and supports almost all of the modern routing protocols.

I've been using them for years now.

July 1, 2015 at 3:34 p.m. UTC

There are carrier equipment vendors that are using solely Linux in their boxes. Check Extreme Networks for example.