Policing versus shaping

By stretch | Wednesday, July 30, 2008 at 9:43 a.m. UTC

There are two methods to limit the amount of traffic leaving an interface: policing and shaping. When an interface is policed outbound, traffic exceeding the configured threshold is dropped (or remarked to a lower class of service). Shaping, on the other hand, buffers excess (burst) traffic and transmits it later, during non-burst periods. Shaping has the potential to make more efficient use of bandwidth, at the cost of additional overhead on the router.
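To make the drop-versus-buffer distinction concrete, here is a minimal Python sketch of both behaviors under simplifying assumptions. The policer is a single token bucket refilled at the CIR with a depth of Bc bytes. The shaper simply paces packets out at the CIR from an unbounded queue. Real IOS shapers release up to Bc bits per interval (Tc) and have a finite queue. The function names `police` and `shape` are hypothetical illustrations, not Cisco's actual algorithms.

```python
# Simplified models (hypothetical helpers, not Cisco's actual algorithms).
# Each packet is an (arrival_time_seconds, size_bytes) tuple, sorted by time.


def police(arrivals, cir_bps, bc_bytes):
    """Single-rate token-bucket policer: forward if enough tokens, else drop."""
    tokens = float(bc_bytes)  # the bucket starts full
    last_t = 0.0
    sent, dropped = [], []
    for t, size in arrivals:
        # Refill at CIR (converted to bytes per second), capped at Bc.
        tokens = min(bc_bytes, tokens + (t - last_t) * cir_bps / 8)
        last_t = t
        if size <= tokens:
            tokens -= size
            sent.append((t, size))
        else:
            dropped.append((t, size))  # excess traffic is discarded at once
    return sent, dropped


def shape(arrivals, cir_bps):
    """Shaper with an unbounded queue: delay packets so output never exceeds CIR."""
    next_free = 0.0  # time at which the outgoing link is free again
    sent = []
    for t, size in arrivals:
        start = max(t, next_free)  # hold the packet in the queue until the link is free
        next_free = start + size * 8 / cir_bps
        sent.append((start, size))
    return sent


# A burst of 40 x 1500-byte packets arriving all at once.
burst = [(0.0, 1500)] * 40
sent, dropped = police(burst, cir_bps=1_000_000, bc_bytes=31_250)
print(len(sent), "policed packets forwarded,", len(dropped), "dropped")
shaped = shape(burst, cir_bps=1_000_000)
print(len(shaped), "shaped packets forwarded, last one at", round(shaped[-1][0], 3), "s")
```

With a 1 Mbps CIR, the policer forwards only the 20 packets its 31,250-byte bucket can cover and drops the other 20. The shaper forwards all 40 but spreads them over roughly 0.47 seconds. The shaper trades delay (and queue memory) for fewer drops.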

All this is just dandy, but doesn't mean much until you see its effects on real traffic. Consider the following lab topology:

We'll be using Iperf on the client (192.168.10.2) to generate TCP traffic to the server (192.168.20.2). In the middle is R1, a Cisco 3725. Its F0/1 interface will be configured for policing or shaping outbound to the server.

Iperf

Iperf is able to test the bandwidth available across a link by generating TCP or UDP streams and benchmarking the throughput of each. To illustrate the effects of policing and shaping, we'll configure Iperf to generate four TCP streams, which we can monitor individually. To get a feel for how Iperf works, let's do a dry run before applying any QoS policies. Below is the output from Iperf on the client end after running unrestricted across a 100 Mbit link:

    Client$ iperf -c 192.168.20.2 -t 30 -P 4
    ------------------------------------------------------------
    Client connecting to 192.168.20.2, TCP port 5001
    TCP window size: 8.00 KByte (default)
    ------------------------------------------------------------
    [1916] local 192.168.10.2 port 1908 connected with 192.168.20.2 port 5001
    [1900] local 192.168.10.2 port 1909 connected with 192.168.20.2 port 5001
    [1884] local 192.168.10.2 port 1910 connected with 192.168.20.2 port 5001
    [1868] local 192.168.10.2 port 1911 connected with 192.168.20.2 port 5001
    [ ID] Interval       Transfer     Bandwidth
    [1900]  0.0-30.0 sec  84.6 MBytes  23.6 Mbits/sec
    [1884]  0.0-30.0 sec  84.6 MBytes  23.6 Mbits/sec
    [1868]  0.0-30.0 sec  84.6 MBytes  23.6 Mbits/sec
    [1916]  0.0-30.0 sec  84.6 MBytes  23.6 Mbits/sec
    [SUM]  0.0-30.0 sec   338 MBytes  94.5 Mbits/sec

Iperf is run with several options:

- -c - Toggles client mode, with the IP address of the server to contact

- -t - The time to run, in seconds

- -P - The number of parallel connections to establish

We can see that Iperf is able to effectively saturate the link at around 95 Mbps, with each stream consuming a roughly equal share of the available bandwidth.
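The Transfer and Bandwidth columns use different units, which can be confusing at first. The short Python check below assumes that Iperf 2 reports transfer sizes in binary units (1 MByte = 1024 × 1024 bytes) and bit rates in decimal units (1 Mbit = 1,000,000 bits). The [SUM] line above is consistent with that convention. `transfer_to_mbps` is a hypothetical helper, not part of Iperf.

```python
# Hypothetical helper: convert an Iperf "Transfer" figure into a bit rate.
# Assumption: Iperf 2 reports transfer in binary units (1 MByte = 1024 * 1024
# bytes) but bandwidth in decimal units (1 Mbit = 1,000,000 bits).

BYTES_PER_MBYTE = 1024 * 1024
BITS_PER_MBIT = 1_000_000


def transfer_to_mbps(mbytes: float, seconds: float) -> float:
    """Average throughput in Mbit/s for `mbytes` MBytes moved in `seconds`."""
    return mbytes * BYTES_PER_MBYTE * 8 / seconds / BITS_PER_MBIT


total = transfer_to_mbps(338, 30.0)  # [SUM] line of the unrestricted run
print(f"{total:.1f} Mbit/s aggregate")                   # 94.5 Mbit/s aggregate
print(f"{total / 100:.1%} of a 100 Mbit/s link")         # 94.5% of a 100 Mbit/s link
print(f"{total / 4:.1f} Mbit/s per stream if split evenly")  # 23.6 Mbit/s per stream ...
```

The even split of about 23.6 Mbit/s per stream matches what Iperf reports for each of the four connections.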

Policing

Our first test will measure the throughput from the client to the server when R1 has been configured to police traffic to 1 Mbit. To do this we'll need to create the appropriate QoS policy and apply it outbound to F0/1:

    policy-map Police
     class class-default
      police cir 1000000
    !
    interface FastEthernet0/1
     service-policy output Police

We can then inspect our applied policy with show policy-map interface. F0/1 is being policed to 1 Mbps with a committed burst (bc) of 31250 bytes:

    R1# show policy-map interface
     FastEthernet0/1

      Service-policy output: Police

        Class-map: class-default (match-any)
          2070 packets, 2998927 bytes
          5 minute offered rate 83000 bps, drop rate 0 bps
          Match: any
          police:
              cir 1000000 bps, bc 31250 bytes
            conformed 1394 packets, 1992832 bytes; actions:
              transmit
            exceeded 673 packets, 1005594 bytes; actions:
              drop
            conformed 57000 bps, exceed 30000 bps
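The 31250-byte burst was not configured explicitly, so it is a default derived from the CIR. As we understand the IOS default, when bc is omitted the policer uses CIR/32 bytes (250 ms worth of traffic at the CIR), with a floor of 1500 bytes. The sketch below encodes that assumption in a hypothetical helper, `default_police_bc_bytes`. Verify the rule against the documentation for your IOS release.

```python
# Hypothetical helper reproducing the policer's default committed burst.
# Assumption: when "bc" is omitted, IOS uses CIR/32 bytes (250 ms of traffic
# at the CIR), but never less than 1500 bytes.


def default_police_bc_bytes(cir_bps: int) -> int:
    return max(cir_bps // 32, 1500)


cir = 1_000_000
bc = default_police_bc_bytes(cir)
print(bc, "bytes")                        # 31250 bytes, matching "bc 31250 bytes"
print(bc * 8 / cir * 1000, "ms at CIR")   # 250.0 ms at CIR (time to refill an empty bucket)
print(bc // 1500, "back-to-back 1500-byte packets")  # 20 back-to-back 1500-byte packets
```

A full bucket lets only about 20 full-size packets through back to back. Any larger burst from the four TCP streams is dropped, which is what the exceeded counter reflects.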

Repeating the same Iperf test now yields very different results:

Client$ iperf -c 192.168.20.2 -t 30 -P 4 ------------------------------------------------------------ Client connecting to 192.168.20.2, TCP port 5001 TCP window size: 8.00 KByte (default) ------------------------------------------------------------ [1916] local 192.168.10.2 port 1922 connected with 192.168.20.2 port 5001 [1900] local 192.168.10.2 port 1923 connected with 192.168.20.2 port 5001 [1884] local 192.168.10.2 port 1924 connected with 192.168.20.2 port 5001 [1868] local 192.168.10.2 port 1925 connected with 192.168.20.2 port 5001 [ ID] Interval Transfer Bandwidth [1884] 0.0-30.2 sec 520 KBytes 141 Kbits/sec [1916] 0.0-30.6 sec 1.13 MBytes 311 Kbits/sec [1900] 0.0-30.5 sec 536 KBytes 144 Kbits/sec [1868] 0.0-30.5 sec 920 KBytes 247 Kbits/sec [SUM] 0.0-30.6 sec 3.06 MBytes 841 Kbits/sec

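Notice that although we've allowed for up to 1 Mbps of traffic, Iperf achieved only 841 Kbps. Each time the policer drops a segment, the affected TCP connection backs off and shrinks its window, leaving part of the allowed rate unused. Also notice that, unlike in our prior test, the flows do not receive equal shares of the available bandwidth. This is because policing (as configured) does not recognize individual flows. It simply drops whichever packet arrives when the configured threshold would be exceeded, regardless of which flow that packet belongs to.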

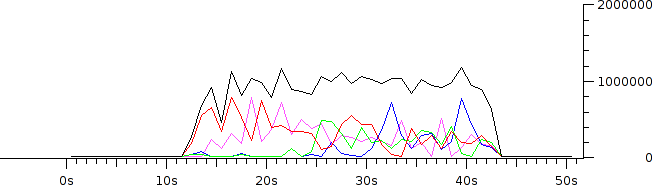

Using Wireshark's IO graphing feature on a capture obtained at the server, we can observe the erratic, uneven behavior of the individual flows. The black line measures the aggregate throughput, and each colored line represents an individual TCP flow.

Shaping

In contrast to policing, we'll see that shaping handles traffic in a very organized, predictable manner. First we'll need to configure a QoS policy on R1 to shape traffic to 1 Mbit. When applying the Shape policy outbound on F0/1, be sure to remove the Police policy first with no service-policy output Police.

    policy-map Shape
     class class-default
      shape average 1000000
    !
    interface FastEthernet0/1
     service-policy output Shape

Immediately after starting our Iperf test a third time, we can see that shaping is taking place:

    R1# show policy-map interface
     FastEthernet0/1

      Service-policy output: Shape

        Class-map: class-default (match-any)
          783 packets, 1050468 bytes
          5 minute offered rate 0 bps, drop rate 0 bps
          Match: any
          Traffic Shaping
               Target/Average   Byte   Sustain   Excess    Interval  Increment
                 Rate           Limit  bits/int  bits/int  (ms)      (bytes)
               1000000/1000000  6250   25000     25000     25        3125

            Adapt  Queue  Packets  Bytes    Packets  Bytes    Shaping
            Active Depth                    Delayed  Delayed  Active
            -      69     554      715690   491      708722   yes
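The columns of the Traffic Shaping table are related to one another. Tc = Bc / CIR is the standard token-bucket relation. The other two relations used below are inferred from this output and from the 500 kbps output posted in the comments: increment = Bc in bytes, and byte limit = (Bc + Be) in bytes. Treat them as an observation rather than documented behavior. `shaper_params` is a hypothetical helper.

```python
def shaper_params(cir_bps, bc_bits, be_bits):
    """Derive interval, increment and byte limit as displayed by show policy-map.

    Tc = Bc / CIR is the standard relation; the increment and byte-limit
    formulas are inferred from the outputs in this post (an assumption).
    """
    interval_ms = bc_bits * 1000 / cir_bps   # Tc = Bc / CIR, in milliseconds
    increment_bytes = bc_bits / 8            # Bc expressed in bytes
    byte_limit = (bc_bits + be_bits) / 8     # Bc + Be expressed in bytes
    return interval_ms, increment_bytes, byte_limit


print(shaper_params(1_000_000, 25_000, 25_000))  # (25.0, 3125.0, 6250.0)
print(shaper_params(500_000, 12_000, 12_000))    # (24.0, 1500.0, 3000.0)
```

At 1 Mbps, the shaper may therefore release 3125 bytes (about two full-size packets) every 25 ms. Anything beyond that waits in the queue, which is why the output shows a queue depth of 69 and hundreds of delayed packets.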

This last test concludes with very consistent results:

    Client$ iperf -c 192.168.20.2 -t 30 -P 4
    ------------------------------------------------------------
    Client connecting to 192.168.20.2, TCP port 5001
    TCP window size: 8.00 KByte (default)
    ------------------------------------------------------------
    [1916] local 192.168.10.2 port 1931 connected with 192.168.20.2 port 5001
    [1900] local 192.168.10.2 port 1932 connected with 192.168.20.2 port 5001
    [1884] local 192.168.10.2 port 1933 connected with 192.168.20.2 port 5001
    [1868] local 192.168.10.2 port 1934 connected with 192.168.20.2 port 5001
    [ ID] Interval       Transfer     Bandwidth
    [1916]  0.0-30.4 sec   896 KBytes   242 Kbits/sec
    [1868]  0.0-30.5 sec   896 KBytes   241 Kbits/sec
    [1884]  0.0-30.5 sec   896 KBytes   241 Kbits/sec
    [1900]  0.0-30.5 sec   896 KBytes   241 Kbits/sec
    [SUM]  0.0-30.5 sec  3.50 MBytes   962 Kbits/sec

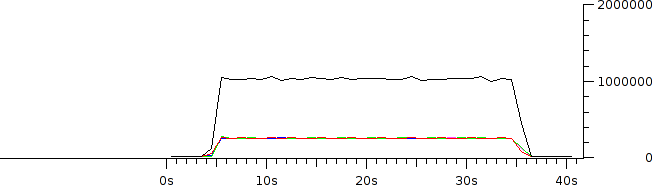

With shaping applied, Iperf is able to squeeze 962 Kbps out of the 1 Mbps link, a 14% gain over policing. However, keep in mind that the gain measured here is incidental and very subject to change under more real-world conditions. Also notice that each stream receives a fair share of bandwidth. This even distribution is best illustrated graphically through an IO graph of a second capture:
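Both the 14% figure and the fairness claim can be checked against the per-stream Iperf results. To quantify fairness, the sketch below uses Jain's fairness index, a common metric that the original post does not use. The index is 1.0 when all flows get identical throughput and falls toward 1/n as one flow dominates. `jain_index` is a hypothetical helper written for this example.

```python
def jain_index(rates):
    """Jain's fairness index: (sum x)^2 / (n * sum x^2); 1.0 means perfectly equal."""
    n = len(rates)
    return sum(rates) ** 2 / (n * sum(r * r for r in rates))


# Per-stream Kbit/s figures copied from the two Iperf runs above.
policed = [141, 311, 144, 247]
shaped = [242, 241, 241, 241]

print(f"gain: {962 / 841 - 1:.1%}")                       # gain: 14.4%
print(f"policing fairness: {jain_index(policed):.3f}")    # policing fairness: 0.896
print(f"shaping fairness:  {jain_index(shaped):.3f}")     # shaping fairness:  1.000
```

The shaped run is essentially perfectly fair. The policed run, where one stream got more than twice the throughput of another, scores noticeably lower.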

Posted in Quality of Service

Comments

July 30, 2008 at 12:20 p.m. UTC

Hi Jeremy, the post is quite interesting. May I ask whether "iperf" and "jperf" can only run on Unix distributions?

Thanks

July 30, 2008 at 1:13 p.m. UTC

Interesting to see an example with real traffic. Did you try the same scenario with UDP traffic? It would be interesting to know whether the output behaves the same way.

July 30, 2008 at 1:45 p.m. UTC

@Lee:

Iperf and Jperf (a Java-based GUI frontend for Iperf) are available for Windows as well. In fact, this test was actually run from a Windows client.

@zlobb:

UDP traffic exhibits the same behavior, but isn't as drastically affected by policing since no windowing is used. Excess UDP packets are simply dropped by the policer, while the client continues to transmit at the same rate. Shaping affects UDP the same way it affects TCP.

July 30, 2008 at 3:24 p.m. UTC

Hi, thanks for the tutorial. I'm having a problem with the shaping part. After applying the policy to the interface, the shaping status shows as "no":

    Service-policy output: shape

      Class-map: class-default (match-any)
        5 packets, 1469 bytes
        5 minute offered rate 0 bps, drop rate 0 bps
        Match: any
        Traffic Shaping
             Target/Average   Byte   Sustain   Excess    Interval  Increment
               Rate           Limit  bits/int  bits/int  (ms)      (bytes)
             500000/500000    3000   12000     12000     24        1500

          Adapt  Queue  Packets  Bytes    Packets  Bytes    Shaping
          Active Depth                    Delayed  Delayed  Active
          -      0      5        1469     0        0        no

July 30, 2008 at 3:42 p.m. UTC

The "Shaping Active" field only displays "Yes" when shaping is actually being performed. If the load on the interface isn't at or exceeding the threshold, traffic is passed normally without any shaping taking place. Try generating some traffic out the interface to activate shaping.

July 30, 2008 at 4:13 p.m. UTC

Great write up! I am sure it has been around for a while, but I am also glad to have found the iperf tool you used here.

July 30, 2008 at 6:31 p.m. UTC

Hey awesomes!

Which of the two would you consider ideal on an internal perimeter router in a corporate network, right on the border of the DMZ? Or on any router in the network, to curb the traffic? Let's say there are around 50 users.

July 30, 2008 at 8:28 p.m. UTC

Excellent and concise article. Thank you very much--iperf is an excellent tool for testing QoS.

July 31, 2008 at 10:55 a.m. UTC

Hi Stretch,

I would like to lab this up in GNS3. Can you think of any way to get this iperf traffic to flow between two loopbacks, i.e. to force the iperf traffic to flow through my virtual GNS3 routers?

Otherwise it will require two PCs for me to test it.

Cheers

July 31, 2008 at 1:26 p.m. UTC

Yeah, traffic is passing when I run the show command. I can see the packet count incrementing. I am using Dynamips and VMware, by the way.

August 2, 2008 at 8:41 p.m. UTC

Nice little post there. It provides a very visual indication of both the pros and cons of policing and shaping.

One thing I did notice: you left the load-interval at the default of 5 minutes. On uplinks and other important links, I typically reconfigure the load-interval to 30 seconds with the command "load-interval 30" to get better insight into current link utilization.

Cheers, keep up the good posts!

August 12, 2008 at 9:38 a.m. UTC

Greetings, and first of all thank you for your time writing these articles; they have proven to be very insightful!

Just a quick question on the above: I've typically configured shaping on the interface itself using the command "traffic-shape", which works with the example below.

I have tried the above example with two 871s connected via a 3640 over an IPsec VPN, and neither shaping nor policing occurs. Would this be related to the use of the class-default statement, or to the two hosts being seen as internal?

Regards

August 12, 2008 at 10:23 a.m. UTC

The earlier issue was corrected with a reboot. Thanks once again for your time!

Regards

August 27, 2008 at 4:44 p.m. UTC

Can we mangle traffic so that we can give different speeds to HTTP, SMTP, and BitTorrent traffic?

October 14, 2008 at 7:51 p.m. UTC

very nice site :-)

June 22, 2009 at 9:46 a.m. UTC

Excellent document. May I ask whether you also tested the same configuration with Committed Access Rate (CAR) limiting?

July 8, 2009 at 11:16 p.m. UTC

Hello, I used Jperf. I need to understand what the UDP bandwidth parameter means, what its maximum and minimum values are, and what influence it has in Jperf.

Thanks.

February 7, 2010 at 3:07 p.m. UTC

Does anyone know how to set the TOS byte with iperf? I tried the -S option but it doesn't seem to be working (I see the default value of 0x0).

Thanks

April 21, 2010 at 5:09 p.m. UTC

@guest

The easiest and best way to set any QoS / CoS bits is to trust the router to do so. Just remember: Classify / Identify on ingress then apply classes to the marked frames / packets from that point on.

As an example:

    !
    ip access-list extended known-sources
     permit ip host 1.1.1.1 any
    !
    class-map match-all known-sources
     match access-group name known-sources
    !
    policy-map set-dscp
     class known-sources
      set dscp af11
     class class-default
      set dscp default
    !
    interface GigabitEthernet0/0
     service-policy input set-dscp
    !

In the example above, we use an ACL to identify a specific host we want to mark, make a class to call the ACL, then create a policy to call the class and perform an action. Note that we are taking this action on ingress only. Also, I typically reset DSCP values for unknown traffic sources to avoid problems on other routing nodes.

Then you just need to perform some action on your transit interface, something like this:

    !
    class-map match-all dscp-af11
     match ip dscp af11
    !
    policy-map backbone-facing
     class dscp-af11
      priority 1000
     class class-default
    !
    interface GigabitEthernet0/1
     service-policy output backbone-facing
    !

The only gotcha I've run across is specific to Ethernet interfaces + VLAN sub-interfaces + sub-rate services (i.e., less than link speed). In that case, with some versions of IOS, a parent/child policy-map must be configured. Basically, you need a parent policy-map to define how much total bandwidth the sub-interface gets, then a child policy to shape or police certain classes to your desired levels. Just keep in mind that sub-rate Ethernet requires a little additional work and you should be fine.

June 20, 2012 at 9:35 p.m. UTC

Nice!

August 3, 2013 at 10:48 a.m. UTC

Nice post. We did a huge QoS implementation recently and no one had such a nice, real-world way of showing whether it actually works... Good job!

November 20, 2013 at 7:57 p.m. UTC

Hello,

I was just wondering what filter you used to differentiate the flows in Wireshark?

Thanks :)

September 12, 2014 at 5:20 p.m. UTC

Thanks for the article, Stretch. Would you mind doing one that goes into more depth on QoS? Maybe touch on HQoS and its benefits?